A Dispatch from the Quiet Corridors

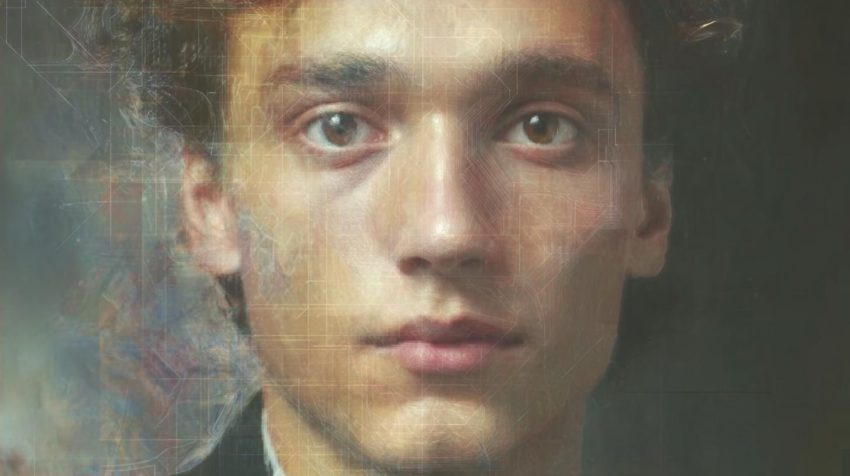

Inside the hush of a windowless lab I’ll simply call GNTC, we stare at screens until the pixels give up their secrets. Think of “GNTC” here as a composite label, a shorthand for the places and people who study how truth fractures online. My vantage is not about theatrics or grand conspiracies; it’s the day-to-day of pattern analysis, datasets that never sleep, and the steady hum of forensic models at work. I’m writing about AI deepfakes and misinformation because they’re no longer edge cases. They’re infrastructure—woven into political persuasion, media manipulation, and scams so personal they’ll make your skin crawl.

What follows is a map. It traces where synthetic media does the most harm, how we’re building countermeasures, and how you (reader, voter, colleague, parent) can stay upright when the ground of evidence turns to sand. Along the way, I’ll cover deepfakes in political campaigns, deepfakes on social media platforms, and the role of AI in combating them, because the fight isn’t just technical; it’s civic and human.

The Problem in Fresh Light

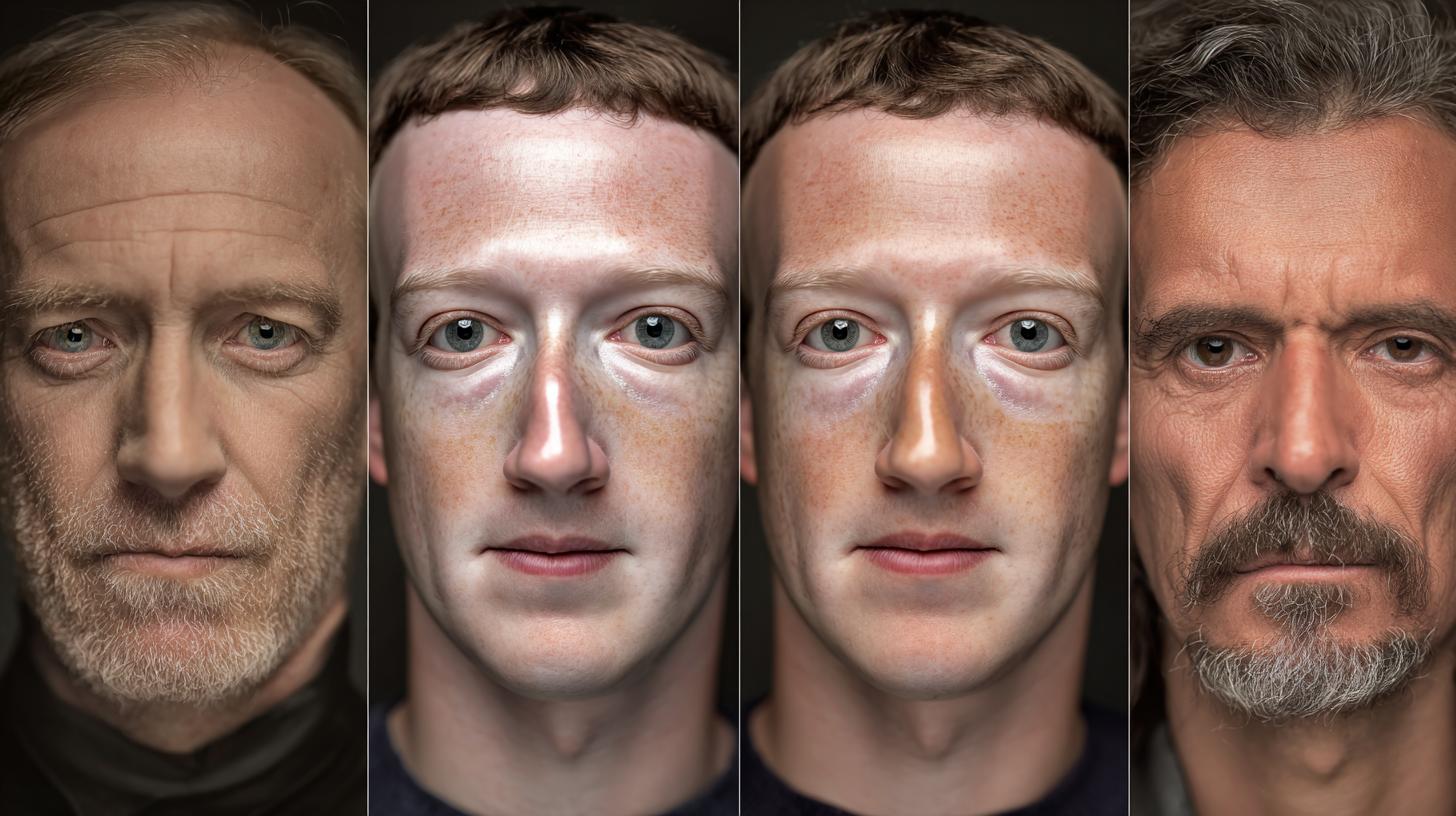

We used to worry about rumors. Now we contend with fabricated voices and faces wearing authority like a suit. Misinformation spread through deepfakes hits harder because our brains are wired to trust sight and sound. When a plausible video slips into your feed (polished lighting, a confident voice, a watermark that looks authentic), you don’t run a forensic lab in your head. You react. That’s where the social impact of viral deepfakes bites hardest: a few seconds of belief harden into opinion, and opinion steers action.

Every week, we see deepfake-driven misinformation flare up in new forms around the globe: a candidate saying words they never said, a CEO issuing a fake apology, a diplomat’s voice cloned to seed panic. In our logs, the erosion of public trust is not an abstraction. It shows up as drops in institutional credibility, one manipulated clip at a time. The damage to democracy is not just about swaying voters; it’s the dull ache of learned helplessness, when people conclude nothing can be known for sure and stop trying.

Where They Hit Hardest

Politics and Elections

Election season is a petri dish. Political deepfakes arrive late at night, just before a debate or a vote, designed for maximal chaos. Their effect on elections hinges on timing: publish, go viral, get retracted, and let the narrative rot slowly. We’ve studied the telltale choreography: sockpuppet accounts, coordinated shares, and commentary primed to nudge outrage over the edge. Add voice cloning, and an attacker can place a phone call from a “campaign manager,” shift a talking point, or drop a false concession speech that rattles markets before dawn.

We need more than ad hoc responses. Government regulation of deepfakes is maturing, but real safety comes from layered regulatory frameworks: clear labeling standards, rapid takedown protocols for malicious content, and carve-outs that protect satire and legitimate art. International treaties will matter too, because cross-border operations exploit jurisdictional gaps. National security teams already treat deepfakes as a standing threat: a fabricated speech about troop movements or an altered clip of a minister can spike tensions within hours.

Journalism, Markets, and the Information Core

Newsrooms used to verify quotes; now they have to verify the pixels themselves. Deepfakes are a stress test for editorial workflows. Pre-publication checks often run AI detection tools alongside old-school reporting: call-backs, source triangulation, and chain-of-custody verification for files. If you want to feel your stomach sink, watch a plausible “leak” ripple across sites while editors scramble with fake-news detection modules that return probability scores, not certainties.

Markets move on breath and rumor. When a forged press release hits a wire service or a deepfaked executive appears in a livestream, the damage to a stock can unfold in minutes. We have case studies where seconds of fabricated “guidance” were enough to trigger algorithmic trades and whipsaw a ticker. Elsewhere, deepfakes used for historical revisionism pull the past into new shapes, fabricating “evidence” that a massacre was staged or that a leader championed a policy they opposed. The result isn’t just confusion; it’s narrative capture.

Entertainment, Ads, and the Celebrity Machinery

Deepfake videos of celebrities travel faster than apologies. Studios and agencies live with the whiplash: some work with artists to archive likeness rights, others publish explicit consent frameworks. Meanwhile, deepfakes in advertising dangle the promise of dynamic personalization while flirting with deception. When a beloved actor “endorses” your product, are we in parody territory, or have we crossed into fake endorsements meant to mislead?

Courts are only beginning to find their feet. Expect more deepfake lawsuits in US courts over privacy violations, right-of-publicity claims, and synthetic media created to defame or harass. If a campaign or a brand negligently amplifies synthetic slander, the question of AI companies’ liability for deepfakes may not stay hypothetical for long. Add licensing knots around training material, and you can feel the industry inch toward standardized provenance tags and rights registries.

Personal Harms, Scams, and the Private Sphere

The ugliest frontier is intimate. Non-consensual deepfake pornography has moved from lurid novelty to mainstream menace. Even low-fidelity swaps can crater a person’s life: workplace whispers, family fallout, a permanent search result you never consented to. Add deepfake cyberbullying, and you’ll find teenagers feigning composure while their classmates swap doctored clips in group chats.

Criminals get practical. We see scams where an attacker clones a voice to demand an emergency wire transfer, and online dating scams where a synthetic persona drip-feeds affection while farming authentication codes. Identity theft now includes facial reenactment to bypass selfie checks. And it’s not just video: manipulated audio can dupe call-center agents who trust a familiar cadence more than a case number. The psychological effects on victims are real: anxiety spikes, wrecked sleep, a feeling that you no longer own your face.

Under the Hood: A High-Level Look

At a safe altitude, here’s what matters. AI algorithms for deepfake generation typically stitch together multiple components: encoders that compress identity traits, decoders or diffusion models that paint them back with alarming fidelity, and post-processing pipelines that color-match, blur edges, and smooth motion. Modern systems learn expressions as distributions—how a smile curves given bone structure and lighting—so they can translate one person’s performance onto another’s canvas. It’s not magic, just math with too much training data and compute.

Which leads to concerns about training datasets. If your dataset scrapes faces at scale without consent, you’ve built your tech on a rights problem. Bias rides along too: underrepresented groups often get worse quality or stranger artifacts, which is both a harm and a detection hook. Meanwhile, the time from idea to convincing output keeps shrinking. Cloud platforms and open-source code make it easy to tinker without understanding the internals, but high-grade results still take skill, time, and iterative tuning. That’s the thin good news: real artistry is hard to counterfeit at scale.

Detection, Forensics, and Proof of Origin

People ask for a silver bullet. We don’t have one. AI detection works best as an ensemble: forensic cues in the pixels, provenance in the file’s history, and behavioral checks on who posted it, when, and why. Forensic techniques examine compression noise, lighting inconsistencies, and physiological signals that are stubborn to fake, such as subtle pulse-driven color changes in the skin, blink dynamics, and micro-expressions. On the provenance side, watermarks and cryptographic signatures mark content at creation, while content credentials travel with the file like a tamper-evident seal.
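To make the “tamper-evident seal” idea concrete, here is a minimal sketch of binding a file’s SHA-256 hash and its capture metadata under one signature. It is a deliberate simplification: it uses a shared-secret HMAC from Python’s standard library, whereas real content-credential standards such as C2PA sign with public-key certificates. The key, the field names, and the `seal`/`verify` helpers are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real content-credential systems (such as C2PA) sign
# with public-key certificates so anyone can verify without holding a secret.
SIGNING_KEY = b"demo-key-not-for-production"


def _canonical(manifest: dict) -> bytes:
    # Stable JSON encoding so the same manifest always produces the same bytes.
    return json.dumps(manifest, sort_keys=True).encode("utf-8")


def seal(media_bytes: bytes, metadata: dict) -> dict:
    """Bind capture metadata and a SHA-256 of the media under one HMAC tag."""
    manifest = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "metadata": metadata,
    }
    tag = hmac.new(SIGNING_KEY, _canonical(manifest), hashlib.sha256).hexdigest()
    return {"manifest": manifest, "tag": tag}


def verify(media_bytes: bytes, credential: dict) -> bool:
    """True only if the manifest is untouched AND still matches the media."""
    expected = hmac.new(SIGNING_KEY, _canonical(credential["manifest"]),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, credential["tag"]):
        return False  # manifest was edited after sealing
    current = hashlib.sha256(media_bytes).hexdigest()
    return hmac.compare_digest(current, credential["manifest"]["sha256"])


if __name__ == "__main__":
    clip = b"raw video bytes"  # stand-in for a real file's contents
    cred = seal(clip, {"device": "camera-07", "captured": "2026-01-15T09:30Z"})
    print(verify(clip, cred))          # True
    print(verify(clip + b"!", cred))   # False: the media changed after sealing
```

Note what the sketch cannot do: if someone strips the credential entirely, there is nothing left to verify, which is why provenance has to be layered with forensics rather than trusted alone.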

Consumer help matters too. Verification tools now include browser extensions that surface source chains, platform-integrated provenance badges, and dedicated analysis portals. Reviews of detection apps tend to focus on three things: accuracy on current model families, robustness to compression and cropping, and transparency about false positives. We recommend a workflow mindset: stack tools, log your checks, and compare outputs before you stake a claim.

| Detection Layer | What It Checks | Strengths | Limitations | Best Used For |
|---|---|---|---|---|
| Forensic AI | Artifacts, lighting, biological signals | Works even without provenance | Arms race with new models; false positives possible | High-stakes verification of suspect clips |
| Provenance/Watermarking | Watermarks, content credentials | Fast, scalable, supply-chain friendly | Breaks if stripped; adoption varies | Platform-level authenticity checks |
| Contextual/Behavioral | Source account, timing, coordination | Catches campaigns, not just files | Inferential; needs corroboration | Coordinated misinformation campaigns |
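The layered logic of that table can be sketched as a simple triage rule. This is a hypothetical illustration, not a production scoring model: the `Evidence` fields, the 0.5 and 0.7 thresholds, and the two-signal rule are assumptions chosen to show the shape of an ensemble in which no single layer convicts on its own.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Evidence:
    forensic_score: float             # 0..1 manipulation probability from a forensic model
    provenance_valid: Optional[bool]  # True/False if credentials exist, None if absent
    context_flags: int                # behavioral red flags: new account, coordinated shares, odd timing


def triage(e: Evidence) -> str:
    """Combine the three layers; no single layer can convict on its own."""
    if e.provenance_valid is True and e.forensic_score < 0.5:
        return "likely authentic"
    signals = sum([
        e.forensic_score >= 0.7,      # forensic layer
        e.provenance_valid is False,  # credentials present but failing verification
        e.context_flags >= 2,         # contextual/behavioral layer
    ])
    if signals >= 2:
        return "likely manipulated: escalate"
    if signals == 1:
        return "inconclusive: corroborate"
    return "no strong signal"


print(triage(Evidence(0.9, False, 3)))  # likely manipulated: escalate
print(triage(Evidence(0.8, None, 0)))   # inconclusive: corroborate
```

A missing credential (`None`) is deliberately not treated as a red flag, since provenance adoption still varies; a credential that is present but fails verification is.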

How to Spot AI Deepfakes

You don’t need a lab to do first-pass checks. Train your eyes and ears, then escalate if needed. Here’s a practical routine you can run in under two minutes.

- Look for edges: jawlines that shimmer, hair that blurs into the background, earrings that warp during head turns.

- Watch lighting: shadows that don’t align, specular highlights that jump between frames.

- Track breath and blink: irregular blinks, a smile that doesn’t crease the eyes, cheeks that don’t rise.

- Listen closely: room acoustics that stay static while the “speaker” moves, syllables that smear.

- Cross-verify: search for the same clip from authoritative accounts; check timestamps and captions across platforms.

- Use tools: run it through several verification tools and compare their outputs, not just a single score.
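Blink checks can also be automated. One common approach, sketched here under stated assumptions, computes an eye aspect ratio (EAR) per frame from facial landmarks; the ratio drops sharply when the eye closes. The sketch assumes a landmark tracker has already produced one EAR value per frame, and the 0.21 threshold and two-frame minimum are illustrative, not tuned. Treat an odd blink rate as a reason to escalate, not a verdict: newer generators often blink convincingly.

```python
def count_blinks(ear: list[float], threshold: float = 0.21, min_frames: int = 2) -> int:
    """Count runs of >= min_frames consecutive frames with EAR below threshold."""
    blinks, run = 0, 0
    for value in ear:
        if value < threshold:
            run += 1          # eye currently closed (or closing)
        else:
            if run >= min_frames:
                blinks += 1   # a closed run just ended: count one blink
            run = 0
    if run >= min_frames:     # clip ended mid-blink
        blinks += 1
    return blinks


def blinks_per_minute(ear: list[float], fps: float) -> float:
    """Blink rate over the whole clip, given the video's frame rate."""
    minutes = len(ear) / fps / 60
    return count_blinks(ear) / minutes if minutes else 0.0
```

Requiring a minimum run length filters out single-frame dips caused by landmark jitter rather than actual eye closure.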

Media literacy education is the scalable answer. People don’t need a PhD; they need habits. That’s why public awareness campaigns emphasize both skepticism and reporting: pause, verify, then share responsibly or report malicious content.

Law, Policy, and Corporate Responsibility

We’re seeing a doctrinal weave form across jurisdictions. Government regulations increasingly target malicious impersonation, election interference, and undisclosed synthetic endorsements. Regulatory frameworks work best when they’re specific: mandating disclosure labels in certain contexts (ads, political content), shielding satire, and fast-tracking orders to remove non-consensual intimate imagery. On the global stage, international treaties on deepfakes are nascent but necessary to address coordinated campaigns that flow across borders and platforms.

Meanwhile, litigators are busy. Early deepfake lawsuits in US courts invoke defamation, right of publicity, copyright, and state-level deepfake statutes covering election seasons and explicit content. Courts are warming to injunctive relief, meaning orders for quick takedowns and bans on re-uploads, which matters when the viral half-life is measured in hours. For firms shipping generative tools, AI companies’ liability for deepfakes sits at the intersection of product design and duty of care. AI ethics boards are pushing for defaults that reduce harm: watermarking switched on by default, safety classifiers that flag intimate or political synthesis, and rate limits on risky features.

Markets, Security, and Democracy

Some risks are systemic. Deepfakes aimed at stock markets play a speed game against automated trading systems tuned to news. A small perturbation can widen into a flash crash. Exchanges and data providers are beginning to verify source integrity as part of their intake hygiene, but it’s a race. In defense and diplomacy, the national security risks include information operations that manufacture “proof” of atrocities or sow confusion during crises. Debunking almost always costs more than fabricating, and that asymmetry is the core strategic advantage of malicious actors.

Which brings us back to civic health. The damage deepfakes do to democracy isn’t a meltdown; it’s fatigue. If a critical mass of voters believes everything is fake, bad actors don’t need to convince anyone of a lie; they only need to persuade them that truth is unknowable. That’s how you dampen turnout, delegitimize oversight, and normalize apathy. Countering that trend demands fast, credible corrections, transparent methods, and consistent enforcement against networks that launder synthetic falsehoods through social media platforms.

2026 on the Horizon: Advances and Defenses

Deepfake advances expected by 2026 are both a promise and a warning. If current trajectories hold, expect better lip synchronization under occlusion (hands, microphones), more stable side profiles, and tighter audio-visual alignment that reduces uncanny lag. Tooling will likely make one-click voice-to-face pipelines common, further lowering the barrier to entry. On the flip side, research on anti-deepfake technology is sprinting too, tuning multimodal detectors that check audio, video, and textual context in one pass.

We’re also mapping mitigation strategies for 2026. Most are incremental but meaningful when layered:

- Default provenance: platforms commit to content credentials on all uploads, with visible badges for verified source material.

- Cross-platform alerts: interoperable signals so a debunk in one network travels to others in near real-time.

- Election guards: heightened scrutiny windows for political content, with rapid review lanes and temporary throttles on virality pending verification.

- Model fingerprinting: imperceptible patterns embedded in generated media that detectors can check for. Unlike visible watermarks, they are designed to withstand casual stripping.

- Incident drills: newsroom and exchange simulations that rehearse the response to synthetic news, so fake-news detection works at operational speed.
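The “temporary throttles” idea from the election-guard item can be as simple as a sliding-window cap on reshares of unverified media. This is a minimal sketch; the `ShareThrottle` class, its limits, and the `verified` flag are assumptions, and a real platform would more likely slow, queue, or prompt than silently refuse.

```python
import time
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class ShareThrottle:
    """Sliding-window cap on reshares of unverified media during a guard window."""

    def __init__(self, max_shares: int, window_s: float):
        self.max_shares = max_shares
        self.window_s = window_s
        # item_id -> timestamps of shares accepted inside the current window
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, item_id: str, verified: bool, now: Optional[float] = None) -> bool:
        if verified:
            return True  # verified media is never throttled
        now = time.monotonic() if now is None else now
        events = self._events[item_id]
        while events and now - events[0] >= self.window_s:
            events.popleft()  # drop shares that fell out of the window
        if len(events) < self.max_shares:
            events.append(now)
            return True
        return False  # over the cap: delay, queue, or prompt instead of sharing
```

With `ShareThrottle(max_shares=2, window_s=60)`, a third unverified share of the same item within a minute returns `False`, while verified media passes untouched.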

Platform Practices and Public Habits

Deepfakes on social media platforms will not vanish, but friction helps. Rate limits, share-with-comment nudges on suspect media, and clear labels reduce knee-jerk amplification. For users, media literacy folds into everyday browsing: treat media like a claim, not a conclusion. Platforms can build verification tools into share flows, showing authenticity signals without breaking the scroll.

Public awareness campaigns work when they avoid scolding and show practical wins: a fake neutralized, an identity restored, a community that refused to spread a lie. Ethical AI development against deepfakes is not a slogan; it’s a set of defaults: conservative releases for risky features, red-team tests for romance scams and enterprise fraud, and research partnerships with watchdogs who test models against real-world abuse.

Applied Scenarios and What to Do

Let’s ground this in common cases we watch every day. If your team handles ad buys, beware deepfake ads that borrow a public figure’s face without permission; disclosure is not enough if the intent is to sow confusion. In newsrooms, give even previously trusted sources a second check during high-stakes cycles, and build a short SOP that routes questionable clips through AI detection tools before publication. In corporate communications, keep voice verification backstops (known phrases, codewords, or secondary channels for approvals) to blunt audio manipulation.
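The callback and multi-approver backstops translate naturally into a payment-release rule. A minimal sketch, assuming a hypothetical `TransferRequest` record and an organization-specific `usual_max` threshold; the key design choice is that a voice request alone never releases funds, however convincing it sounds.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class TransferRequest:
    amount: float
    channel: str                      # how the request arrived: "voice", "email", ...
    callback_confirmed: bool = False  # confirmed by calling a number already on file
    approvers: Set[str] = field(default_factory=set)


def release_decision(req: TransferRequest, usual_max: float, min_approvers: int = 2) -> str:
    """Hold transfers until out-of-band checks pass; never trust the voice alone."""
    if req.channel == "voice" and not req.callback_confirmed:
        return "hold: call back on known line"
    if req.amount > usual_max and len(req.approvers) < min_approvers:
        return f"hold: needs {min_approvers} approvers"
    return "release"
```

The checks run in order of cheapness: a callback defeats a cloned voice outright, and the approver count guards against a single compromised or pressured employee.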

| Threat | Common Vector | Immediate Response | Longer-Term Guardrail |
|---|---|---|---|
| Fake CEO video | Leaked “apology” on microblog | Freeze media buys; verify source; issue holding statement | Provenance tags on official videos; crisis drill with AI checks |
| Election-eve smear | Group chats and fringe sites | Flag to platforms; coordinate trusted messengers to debunk | Pre-bunk campaigns; rapid review lanes with watchdogs |
| Intimate image abuse | DMs, burner accounts | Document; report; seek takedown and legal support | Platform-level hashing; faster legal orders; counseling access |
| Wire fraud via voice clone | “Emergency” call to finance | Hang up; call back on known line; require multi-person approval | Voice challenge-response; payment holds for unusual requests |
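“Platform-level hashing” usually means perceptual hashing: a compact fingerprint that stays stable under small edits, so a known abusive image can be caught on re-upload without the image itself being shared. The sketch below implements the simplest variant, an average hash (aHash), assuming the image has already been downscaled to 8×8 grayscale. Production systems use more robust perceptual hashes (Meta’s open-source PDQ is one example), and the 5-bit match distance here is an illustrative choice.

```python
from typing import List


def average_hash(gray_8x8: List[List[int]]) -> int:
    """64-bit average hash (aHash) of an 8x8 grayscale grid.

    Assumes the image was already downscaled to 8x8 and converted to grayscale.
    """
    flat = [p for row in gray_8x8 for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grid")
    mean = sum(flat) / 64
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)  # one bit per pixel
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def matches_blocklist(candidate: int, blocklist: List[int], max_distance: int = 5) -> bool:
    """True if the candidate is within max_distance bits of any known-abuse hash."""
    return any(hamming(candidate, h) <= max_distance for h in blocklist)
```

Because every pixel is compared with the image’s own mean, light edits such as a brightness shift leave the hash unchanged or nearly so, unlike a cryptographic hash, where a single changed byte yields an unrelated digest.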

Ethics, Liability, and the Supply Chain of Truth

Platforms and model vendors aren’t bystanders. Ethical AI development means building with misuse in mind: prompt filters for obvious harms, rate caps for bulk synthesis, and safety classifiers that can flag synthetic educational content misused to indoctrinate rather than teach. AI ethics boards do their best work when they have teeth: authority to delay or modify releases and to audit post-launch impacts, not just publish guidelines.

On the liability front, expect the standards for AI companies to firm up around foreseeability and mitigation. Could you foresee the tool being used to cause targeted harm? Did you ship reasonable safeguards? Did you respond quickly to abuse reports? Those are the questions regulators and courts will keep asking. For downstream industries (advertising, media, fintech), the same logic applies. Use detection layers. Keep logs. Train staff. And recognize that delays for verification during red-alert windows are not red tape; they’re sanity.

The Human Layer: Skills Over Panic

Everyone wants a checklist; everyone deserves one. Here’s a lean set of habits to keep you safer without turning your life into an audit.

- Default skepticism for “perfectly timed” leaks, especially before votes or earnings calls.

- Listen for room tone and mouth noise; cloned audio often misses the messy bits.

- Favor primary sources: official channels with consistent provenance markers.

- If it provokes a strong reaction, pause. Strong emotions are the preferred vehicle for deepfake misinformation campaigns.

- Report, don’t repost. Platforms can’t act on what they don’t see.

When you do get hit, remember your rights. Privacy violations via deepfakes can be actionable; many jurisdictions offer emergency injunctions, and platforms increasingly honor authenticated takedown requests for non-consensual intimate imagery. Employers should offer support, not suspicion, when a worker is targeted. The duty of care is cultural as much as legal.

Inside the Lab: What We’re Building

Behind the scenes, the role of AI in combating deepfakes looks like this: multimodal detectors trained on diverse corpora, provenance frameworks integrated into camera pipelines, and red teams that stage realistic attacks on our own systems. We vet verification tools not just on benchmark datasets but in live adversarial tests: cropped, compressed, re-uploaded, translated, layered with emojis, the whole messy stack of the internet.

We also push for clear reporting channels and transparency reports that outline how many items were flagged, how fast they were acted on, and what outcomes followed. It’s not perfect. But nothing moves public trust like visible, repeated action. In that sense, “GNTC” is just a metaphor for the networks—public, private, academic—that choose to fight back rather than shrug.
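A transparency report boils down to a few aggregates over an incident log. This sketch assumes a hypothetical log format (epoch-second `flagged_at` and `actioned_at` timestamps, plus an `outcome` label once an item is actioned) and computes the three things the paragraph names: how many items were flagged, how fast they were acted on, and what outcomes followed.

```python
from collections import Counter
from statistics import median
from typing import Dict, List


def transparency_summary(incidents: List[Dict]) -> Dict:
    """Count flagged items, how many were actioned, how fast, and with what outcome.

    Each incident is assumed to carry epoch-second timestamps "flagged_at" and
    "actioned_at" (None while still pending) plus an "outcome" label once actioned.
    """
    actioned = [i for i in incidents if i.get("actioned_at") is not None]
    hours = [(i["actioned_at"] - i["flagged_at"]) / 3600 for i in actioned]
    return {
        "flagged": len(incidents),
        "actioned": len(actioned),
        # Median rather than mean, so a few very slow cases don't mask typical speed.
        "median_hours_to_action": median(hours) if hours else None,
        "outcomes": dict(Counter(i["outcome"] for i in actioned)),
    }
```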

What to Teach and What to Regulate

Schools and community groups can turn fear into fluency. Media literacy education should prioritize case-based learning: here’s a fake, here’s how we knew, here’s the simple tool we ran, and here’s the call we made to report it. On the policy side, regulatory frameworks should resist the temptation to outlaw the technology wholesale. Focus on harms, not the math. Protect satire and art. Hammer malicious impersonation, undisclosed political persuasion, and intimate abuse. And fund the agencies tasked with enforcement; a law unfunded is a rumor with a badge.

Edge Cases and Gray Zones

Not every synthetic is malignant. Deepfakes in the entertainment industry can delight when they honor consent and context: a late actor woven into a scene with family approval, a documentary that reconstructs a voice with explicit labels and archives. Synthetic educational content can illustrate history, languages, or accessibility when disclosure is front and center. The line is bright: are you informing or deceiving? Are you empowering or stripping agency?

But even with consent, risk remains. A sloppy label or a mismatched deployment can slide into fake endorsement or confuse archives used by future researchers. Stewardship requires humility: annotate clearly, publish methods, and keep source materials accessible for independent review.

A Quick Guide for Organizations

If you manage communications, elections, or payments, build a light, durable posture. Here’s a compact list you can steal.

- Adopt provenance: integrate content credentials into cameras and editing suites.

- Layer detection: combine forensic AI, provenance checks, and contextual analysis.

- Drill responses: tabletop exercises for election periods, earnings weeks, and crises.

- Harden approvals: multi-channel verification for voice requests and urgent transfers.

- Educate staff: short modules on how to spot deepfakes, backed by practice runs.

- Engage counsel: pre-plan your legal response to deepfakes that target your organization or leadership.

Choosing and Using Verification Tools

When you evaluate detection tools, remember that no single app sees everything. Think family of tools, not silver bullet. Across reviews of detection apps, consistent themes emerge:

- Evidence, not just scores: prefer tools that show artifacts or provenance trails, not only a probability number.

- Compression resilience: platforms re-encode media; your detector must survive that journey.

- Update cadence: models drift; tools need regular retraining to keep pace with generation techniques through 2026 and beyond.

- Privacy posture: ensure uploads are handled securely; sensitive clips deserve careful custody.

Pair these with platform-native authenticity signals. Some networks now let creators attach content credentials by default. Others experiment with friction—prompts that ask you to verify before resharing hot-button media. None of it is perfect; all of it is better than indifference.

Limits, Unknowns, and What Honesty Looks Like

We won’t win this by promising certainty. Forensics is probabilistic. Labels can be stripped. Watermarks can break. Attackers evolve. Honesty, paradoxically, is our strongest tool: saying “we’re 80% confident and here’s why” builds the muscle memory the public needs to weigh evidence. Over time, fake-news detection should become routine: not exotic wizardry, just a normal checkpoint, the way spellcheck once became one.
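A confidence statement like “80% confident” depends heavily on base rates, which is why a detector’s score is not the same as the probability that a clip is fake. Bayes’ rule makes this concrete. The sensitivity, false-positive rate, and priors below are illustrative numbers, not measurements of any real tool.

```python
def posterior_fake(prior: float, sensitivity: float, false_positive_rate: float) -> float:
    """P(fake | detector flags), by Bayes' rule.

    prior: share of fakes among clips like this one, before running the detector
    sensitivity: P(flag | fake); false_positive_rate: P(flag | authentic)
    """
    p_flag = sensitivity * prior + false_positive_rate * (1 - prior)
    return sensitivity * prior / p_flag


# Same hypothetical detector, two different base rates.
print(round(posterior_fake(0.01, 0.95, 0.05), 2))  # 0.16: random clip from a feed
print(round(posterior_fake(0.50, 0.95, 0.05), 2))  # 0.95: clip already under suspicion
```

A detector that is right 95% of the time on both fakes and authentic clips gives only about a 16% chance that a flagged random feed clip is fake, because authentic clips vastly outnumber fakes. That gap is exactly what an honest confidence statement should explain.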

Conclusion

The world won’t run out of liars, but it can run out of easy marks; that’s the pivot we need to make. The same networks that seed deepfake misinformation campaigns can be bent toward proof: devices that stamp origins, platforms that flag fakes, people who pause before they share. If there’s a gift in this messy moment, it’s clarity: we’re learning the difference between spectacle and evidence, between a synthetic face and a trustworthy source. Build habits, demand transparency, support smart regulation, and remember that the fastest thing online is still a human reaction. Make yours count.