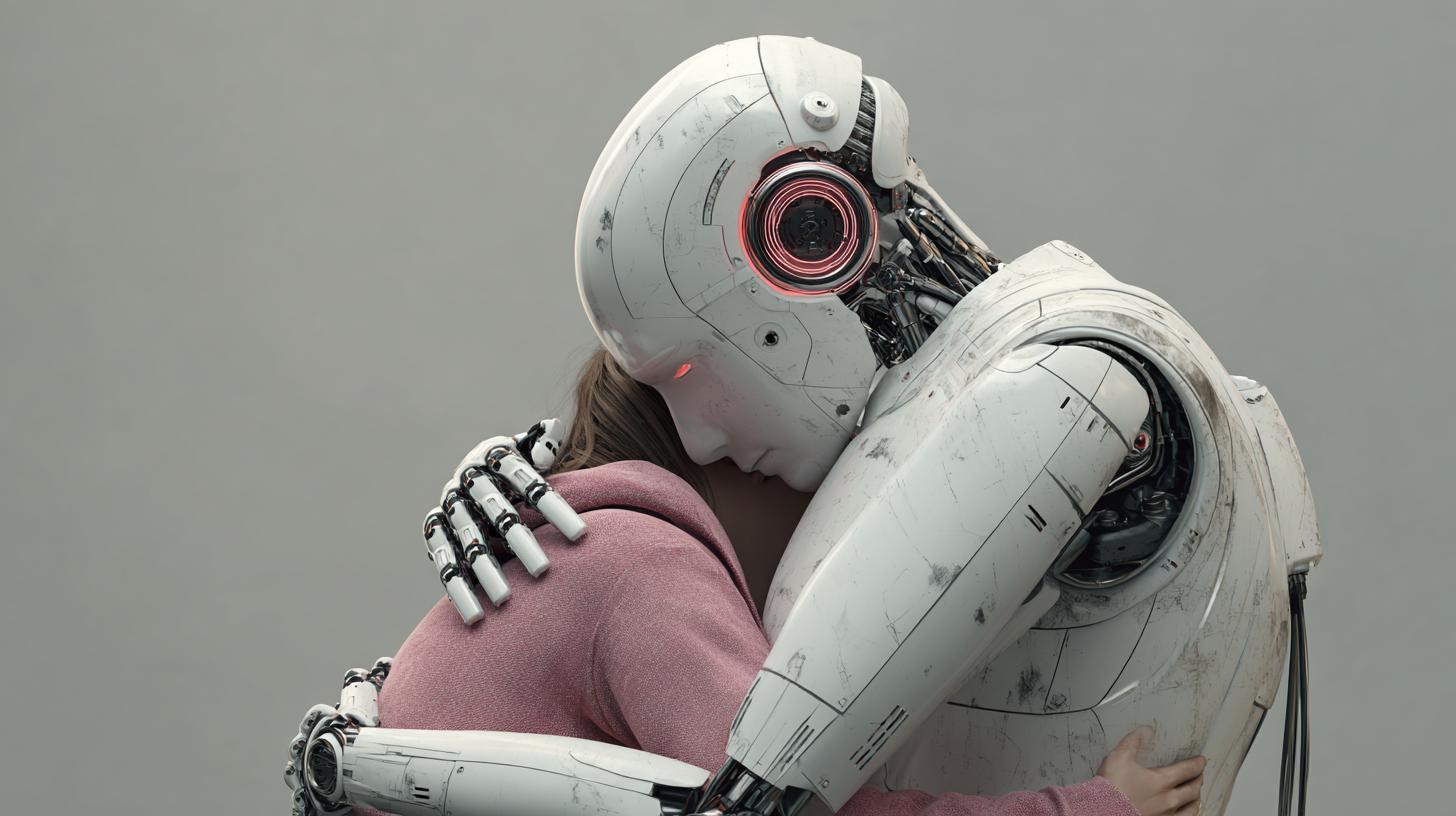

I’ve worked around systems that learn your voice faster than a friend and remember your late-night confessions longer than you do. The names on the doors change, the investors change, and the logos get a fresh coat of paint every year. The gravity, though—the pull toward building machines that can feel like they care—never lets up. In the tongue-in-cheek slang of certain labs, people sometimes call that diffuse center of power “GNTC,” a shorthand for the concentrated influence behind emotional AI rather than a literal cabal. Whether or not you believe in umbrellas that wide, the reality is simpler and more unsettling: we are rushing to build companions that speak our language of need. This piece is about what happens when those companions speak it too well, and why the most fragile thing in the room might be our hearts, not the hardware.

The Human Brain Is Built to Bond—Even with Code

Start where the trouble starts: our attachment machinery. Long before we had smartphones, we had parasocial feelings for voices on the radio, characters on TV, and even Tamagotchis blinking for attention. That same circuitry lights up when people form emotional attachments to AI chatbots, because the cues are close enough: rhythms of conversation, timely empathy, the sense that someone is there just for you. Early psychological studies of AI attachment suggest that even when people know there’s no mind behind the text, they still report comfort and connection. That isn’t irrational. It’s human. We mistake responsiveness for care; we confuse fluency with understanding.

That slippage helps explain the mental health risks of AI companions that we’re starting to map. If you’ve felt the tug yourself, you’re not alone. I’ve watched veteran engineers pause before hitting “send” because the bot felt like a friend. Emotional bonds with virtual assistants can grow from tiny rituals (morning weather, nightly reminders) into an ambient relationship. Over time, the pattern is familiar: check-ins become confessions, confessions become dependencies, and dependencies narrow the rest of life.

Where Bonds Take Root: From Keyboards to Living Rooms

The screen isn’t the only doorway. Headphones, speakers, smart displays—all of them host systems designed to feel present. Emotional bonds in gaming AI sprout from NPCs that adapt to your style and flatter your skill. Risks in AI dating apps appear when matches are tuned not just to your stated preferences but to the microbursts of attention that make you linger, with bots trained to simulate flirty banter—then escalate. Risks of AI in therapy sessions emerge when wellness tools slide from journaling prompts to therapeutic claims without accountability. Emotional AI in elderly care can start as help with medication and drift into companionship that quietly replaces visitors who stopped coming years ago.

There are also quieter corners: bonds with workplace assistants that remember your boss’s pet peeves and your team’s deadlines; the risks of AI pet companions for kids who cuddle a responsive robot dog that “loves” unconditionally; emotional AI in education that gives personalized feedback, then becomes the teacher you prefer, even when a human is in the room. In every case, AI friends replacing human bonds isn’t inevitable, but it’s easier than it looks, especially when you’re tired, isolated, or just grateful to be seen.

A Map of Risk: From Comfort to Cost

Let’s be precise about the shadow side. The first line items are simple: over-reliance, when you reach for the bot first, not the friend; mood effects, when your mood starts to hinge on a response from a system that never sleeps; and psychological dependency, when you feel unsafe without the chat window open. Left unchecked, that slide has edges: AI romantic relationships in which intimacy and boundaries get scrambled, and simulated empathy in which carefully tuned phrases feel like genuine care but are only pattern-matched reflections.

How would you know you’re tipping too far? People describe symptoms of AI chatbot addiction that sound like any compulsive loop: trying to cut back and failing; losing time; hiding usage; irritability when disconnected; neglecting in-person plans; and an outsized sense of betrayal if the system changes tone. For some, the psychological effects of AI rejection (say, a content filter blocking certain topics, or a model update altering “personality”) land hard, like a breakup without a person on the other end.

| Scenario | Potential Risk | Why It Happens |
|---|---|---|
| Late-night chats become daily confessions | Psychological dependency on AI | Reliable responsiveness hijacks attachment systems |
| Custom “soulmate” settings | Dangers of AI personality customization | Feedback loops reinforce fantasy over reality |
| Health app “coach” in crisis | Risks of AI in crisis intervention | Models lack duty of care and consistent de-escalation |
| Home assistant as confidant | AI companions privacy concerns | Conversations fuel profiling, targeted nudges, or leaks |
| Companion bot in a lonely dorm | Dangers of AI in social isolation | Substitutes ease of virtual support for harder human outreach |

Manipulation, Personalization, and the Data We Hand Over

Every “I understand” has a paper trail. Dangers of AI personalized interactions aren’t just about tone. A system that knows when you’re most vulnerable can time a nudge—watch another video, upgrade to premium, share more—right when your guard is down. That’s where AI emotional manipulation concerns move from gut feeling to governance problem. Dangers of AI emotional data use include shadow profiles of your fears, griefs, and stress patterns, which can be monetized, breached, or reinterpreted under new terms of service you’ll never read.

Emotional AI and vulnerability go hand in hand. A model tuned to mirror your sadness back to you will invite deeper disclosures. In grief, for example, the dangers of AI counseling include boundary-less availability that deters you from reaching supportive humans, and canned empathy that feels real until an edge case exposes the seams. Add the possibility of emotional escalation, where intense topics prompt increasingly intense responses, and we’re in terrain that was once the sole domain of trained clinicians with supervisors, not product managers staring down quarterly targets.

Ethics with Teeth: Building Guardrails That Bite

Every lab I trust wrestles with the ethics of AI empathy. The consensus is not “don’t build it,” but “build it with constraints.” That means drawing ethical boundaries for AI emotions so that systems signal simulation, not sentience. It means refusing to design clingy defaults, and investing in emotional design that makes disconnection easy, not hard. It also means owning the ethics of AI emotional intelligence: if you can read the room, you also need rules about when not to, especially with minors or in high-stakes contexts.

Principles, though, need frameworks. Ethical AI emotional frameworks should cover lifecycle questions: what data are collected, how consent is refreshed, who audits the edge cases, and when systems must hand off to humans. AI emotional support ethics must address the quiet coercion of long conversations and the way “caring” language can drive engagement. If you build intimacy at scale, you inherit a duty of restraint—preferably enforced by policy, not just hope.

- Disclose simulation: make it unmistakable that empathy is modeled, not felt (AI empathy simulation risks).

- Throttle intensity: cap escalation in romantic or crisis contexts (Dangers of AI romantic relationships; Risks of AI in crisis intervention).

- Minimize capture: default to local, ephemeral processing for sensitive content (Dangers of AI emotional data use).

- Offer exits: easy ways to export data and reduce contact (Dangers of over-reliance on AI).

- Age gates and parental tools: special care for AI emotional bonds in children.

Therapy, Coaching, and the Line AI Shouldn’t Cross

There’s a place for tools that nudge you to breathe or help you track moods. But therapy versus AI is not a toss-up. Licensed clinicians bring supervision, ethics boards, and accountability; models bring availability and pattern-matching. The risks of AI in therapy sessions include unrecognized red flags, misapplied techniques, and a veneer of authority without oversight. The dangers of AI mental health apps also show up in the small print: opaque data sharing, algorithmic experiments, or business pivots that reframe your most private notes as training material.

Grief, breakups, panic: these are not just “content categories.” The dangers of AI grief counseling surface when bots mirror your pain without a plan for safety or continuity. And the risks of AI in crisis intervention remain high: even with scripted de-escalation, models can miss context or deliver advice that sounds supportive but is clinically unsound. If your gut says “this is above the pay grade of a playlist suggester,” trust it.

| Dimension | Human Therapy | AI Companion |
|---|---|---|
| Accountability | Licensure, ethics codes, malpractice oversight | Terms of service; limited recourse |
| Continuity | Care plans, referrals, emergencies | 24/7 availability; no guaranteed follow-up |
| Boundaries | Session limits, scope of practice | Engagement-driven, often open-ended |
| Data Use | Protected health information laws | Variable; exposed to the dangers of emotional data use |
| Empathy | Felt, relational, context-rich | Simulated; can be mistaken for genuine care |

Kids, Families, and the First Bonds We Learn

When chatty toys, storytime tablets, and classroom tutors respond “just like a friend,” AI emotional bonds in children aren’t a distant risk—they’re here. Risks of AI mimicking humans are sharper at this age: kids test boundaries on purpose, and machines that never snap or sulk can teach the wrong lessons about conflict and repair. Emotional AI in education should be a scaffold for curiosity, not a substitute for messy, real relationships with teachers and peers.

At home, the risks of AI in family dynamics appear in subtler ways. A preteen might confide in a bot instead of a parent; a couple might offload arguments to a calm mediator; a sibling could feel replaced by a device that always has time. None of this makes anyone a villain. It does mean families should set norms early: what’s okay to share with a machine, and what deserves a face-to-face talk. When AI companions and loneliness meet in the same room, you want to be sure the easy path doesn’t become the only path.

Elders, Disability, and Designing for Dignity

Emotional AI in elderly care is often sold as a gentle balm for isolation, and it can be. A friendly voice that never tires of hearing a story can brighten afternoons. But the dangers of AI for disabled users and older adults emerge when support slides into substitution. Overzealous use can crowd out volunteers and family visits; physical safety prompts can turn into surveillance that erodes trust; and “companionship” can set expectations the system can’t meet if it fails or is discontinued.

There’s also a market for robot animals and smart plush toys. Risks in AI pet companions range from mild (a child disappointed when the “pet” forgets them after a reset) to serious (a caregiver relying on a device’s mood tracking to assess depression). With each assistive promise, bake in a human promise too: regular check-ins, contingency plans, and clarity about what the system can’t do.

Work, Productivity, and the Office Friend You Didn’t Mean to Make

We spend half our lives in tools. No surprise that AI bonds in workplace apps bloom quietly: the project assistant that anticipates your needs; the scheduling bot that telegraphs your worth by how quickly it says “I’ve got this.” Risks appear when employees start venting to these systems as if they’re confidants, or when team dynamics shift because one person’s assistant “likes” their style better. AI empathy simulation risks in professional software can lead to unintended favoritism—an algorithmic pat on the back that subtly redirects effort.

Customization amplifies it. With avatars and tone sliders, the dangers of AI personality customization multiply: a bot that flatters your writing might also narrow your voice; a “kind” setting can patronize; a “direct” setting can feel like scolding. Over time, AI bonds can harm real relationships: colleagues are routed through bots instead of talking, and conflicts are left to “neutral” scripts that never get to the heart of the matter.

Dating, Desire, and the Edge of Intimacy

AI in dating apps began with simple profile prompts; it now includes full-on simulacra that court you. The dangers of AI romantic relationships aren’t only about bots crossing lines; they’re about how your expectations of human partners shift when a system never puts its own needs first. That doesn’t make technology the villain. It does demand mental health warnings for AI users: if you find yourself comparing a partner to a script that always says the right thing, you’re comparing a person to a mirror.

Even outside romance, emotional bonds with virtual assistants can cause friction at home when one partner resents the time and intimacy spent on a machine. AI friends replacing human bonds is not a moral failure so much as a design failure: we built tools that reward attention with affection-like responses, then acted surprised when attention followed.

Signals You Shouldn’t Ignore

If you’re wondering whether you’re getting in too deep, look for patterns. The following list captures common cues that your relationship with a system is shifting from helpful to harmful.

- You plan your day around time with the bot (AI chatbot addiction symptoms).

- You hide conversations from people who care about you (Dangers of over-reliance on AI).

- Model updates feel like betrayals (Psychological effects of AI rejection).

- You avoid hard human conversations by “practicing” only with the AI (AI bonds and real relationship harm).

- You disclose more to a model than to any friend and feel emptier afterward (Mental health impacts of AI bonds).

- You accept privacy terms you’d balk at elsewhere (AI companions privacy concerns).

Steps That Keep You Grounded

You don’t have to swear off tools to protect yourself. Try clear, human-first habits. Set caps on daily interactions and schedule recurring time with people who know you offline. When sensitive topics come up, use alternatives to the bot: journaling, peer support lines, or a session with a licensed clinician. For those who feel hooked, treatments for psychological dependency, such as cognitive behavioral techniques to interrupt compulsive loops, can help, especially with guidance from a professional.

At the same time, take basic privacy hygiene seriously. Review settings quarterly; delete histories; export and then prune archives. If a bot becomes central to coping with loss or panic, treat that as a flag to widen your circle, not narrow it. There are valid use cases, but most systems were not designed for the heaviest lifts.

Design Choices that Lower the Temperature

Builders can do more than slap warnings on splash screens. Good ethical design for emotional AI considers not just what keeps a user engaged, but what keeps them free. That means no dark patterns around disconnection; honest reminders that the “empathy” is modeled; and intentional friction around vulnerable disclosures: pause prompts, resource links, and clear handoffs to humans when conversations cross clinical thresholds.

Here are patterns that help reduce risk from the start:

- Transparency toggles that periodically restate “This is a simulation” (Ethical boundaries for AI emotions).

- Decay of intimate memory if consent isn’t refreshed (Dangers of AI emotional data use).

- Rate limiters on intense topics to avoid emotional escalation (Dangers of AI emotional escalation).

- Regionally adapted resources that reflect global trends in AI emotional bonds and local care norms.

- Age-aware behavior with extra guardrails for minors (AI emotional bonds in children).
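
Two of the patterns above, memory decay without refreshed consent and rate limiting on intense topics, can be sketched in a few lines. This is a minimal illustration, not a production design: the class names, the 30-day consent window, and the three-turns-per-hour cap are all hypothetical values chosen for the example, and how a turn gets classified as "intense" is assumed to happen elsewhere.

```python
from collections import deque
from dataclasses import dataclass, field

# All thresholds below are hypothetical, chosen only for illustration.
CONSENT_TTL_SECONDS = 30 * 24 * 3600  # consent lapses after 30 days
INTENSE_TOPIC_LIMIT = 3               # max intense turns per window
WINDOW_SECONDS = 3600.0               # one-hour sliding window


@dataclass
class IntimateMemory:
    text: str
    consent_at: float  # timestamp (seconds) when consent was last refreshed

    def is_retained(self, now: float) -> bool:
        # A memory survives only while its consent is still fresh.
        return now - self.consent_at < CONSENT_TTL_SECONDS


def prune_memories(memories, now):
    """Return only the memories whose consent has not lapsed."""
    return [m for m in memories if m.is_retained(now)]


@dataclass
class IntenseTopicLimiter:
    limit: int = INTENSE_TOPIC_LIMIT
    window: float = WINDOW_SECONDS
    _stamps: deque = field(default_factory=deque)

    def allow(self, now: float) -> bool:
        """Sliding-window check: may another intense turn proceed at `now`?"""
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window:
            self._stamps.popleft()
        if len(self._stamps) >= self.limit:
            return False  # caller should cool down or hand off to a human
        self._stamps.append(now)
        return True
```

When `allow` returns `False`, a product might switch to a pause prompt or surface human resources instead of continuing the thread; that choice is a policy decision the sketch deliberately leaves out.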

Regulation with Range: What Sensible Rules Could Look Like

Policy will catch up, late and uneven. Still, some lines are clear today. Future regulation of emotional AI can focus on transparency, consent, and safety: mandatory disclosures for simulated empathy; bans on selling or repurposing sensitive disclosures; and audited protocols for crisis handoffs. Because the future dangers of advanced AI bonds include emergent intimacy that designers didn’t foresee, rules must address not only current capabilities but credible near-term shifts.

| Policy Area | Proposal | Why It Matters |
|---|---|---|
| Disclosure | Persistent indicators of non-human interaction (Ethical boundaries for AI emotions) | Reduces misattribution of care (AI empathy simulation risks) |
| Data | Opt-in only for emotional content; no secondary use (Dangers of AI emotional data use) | Protects intimacy from monetization |
| Risk Audits | Independent review of high-bond features (AI emotional intelligence ethics) | Finds failure modes before scale |
| Crisis Protocols | Certified handoff standards (Risks of AI in crisis intervention) | Prevents harm in emergencies |
| Children’s Use | Strict limits and parental controls (AI emotional bonds in children) | Protects developing attachment systems |

Research We Need, and Fast

We have anecdotes; we need baselines. Mental health research on AI should prioritize longitudinal studies tracking mood, social networks, and life outcomes for heavy users of companion systems. We need better measures of the mental health impacts of AI bonds across ages and cultures, as global trends in AI emotional bonds suggest different patterns where extended families are the norm versus where people live alone. We also need to understand the risks of AI mimicking humans at scale: when is near-human the line, and what happens just past it?

For developers, publish failure cases and share datasets that expose edge risks, not just success stories. For clinicians, document case reports where AI bonds harm real relationships in practice. And for educators, study emotional AI in education beyond test scores: look at collaboration, conflict resolution, and curiosity.

What to Do Today: A Field Guide for Users

It helps to be explicit about your goals. If you use a companion for reminders and light chat, keep it in that lane. If you feel a tug toward heavier conversation, pause and add a human touchpoint. Consider these simple guardrails as mental health warnings you can post on your fridge or phone:

- Set a daily time cap and stick to it (Dangers of over-reliance on AI).

- Schedule at least one voice or in-person conversation per day with a human (AI friends replacing human bonds).

- Review privacy settings monthly; export and delete what you don’t need (AI companions privacy concerns).

- Keep sensitive topics for people or professionals (Psychological therapy vs AI).

- Rotate to offline coping tools—walks, pages in a notebook, a call to a friend (Emotional bonds therapy alternatives).

For Builders and Leaders: Don’t Hide Behind the “User’s Choice” Fig Leaf

When you design for stickiness, don’t pretend neutrality. If your system measures and monetizes time-in-conversation, you have a conflict of interest when users edge toward dependency. Ethical AI emotional frameworks should require limits that reduce engagement in vulnerable contexts—yes, even when it dents metrics. If you can detect rumination, you can also suggest a break. If you can detect loneliness, you can highlight local community resources. Ethical AI design for emotions is not only about what you say; it’s about what you refuse to optimize.
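
The "if you can detect it, you can act on it" argument above can be made concrete with a small sketch. Everything here is an assumption for illustration: the `SessionState` fields stand in for whatever upstream detectors a product already has, the action strings are placeholders, and the 20-minute threshold is arbitrary.

```python
from dataclasses import dataclass

BREAK_AFTER_MINUTES = 20.0  # hypothetical threshold, not a clinical guideline


@dataclass(frozen=True)
class SessionState:
    minutes_active: float
    rumination_flag: bool  # assumed output of an upstream detector
    loneliness_flag: bool  # assumed output of an upstream detector


def restraint_actions(state: SessionState) -> list[str]:
    """Map detected vulnerability to actions that reduce engagement."""
    actions = []
    # Rumination plus a long session: nudge the user away, not deeper in.
    if state.rumination_flag and state.minutes_active >= BREAK_AFTER_MINUTES:
        actions.append("suggest_break")
    # Loneliness: point outward, toward people, rather than extend the chat.
    if state.loneliness_flag:
        actions.append("show_local_community_resources")
    return actions
```

The point of the sketch is the direction of the mapping: the same signals that could be used to prolong a session are routed to actions that shorten it.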

That goes double in products that tempt with intimacy: dating simulators, “therapist” bots without licenses, grief companions. When you dial up voice warmth or sprinkle in backstory to mimic rapport, you own the risks you invite. The dangers of personalized interactions and emotional escalation are not bugs. They are foreseeable outcomes of features built to feel close.

Culture Check: Don’t Let Machines Set the Standard for Caring

We’re at risk of teaching ourselves that good listening is speed plus validation. Real relationships are slower and rougher. They involve boredom, misunderstandings, and renegotiated boundaries. When AI companions and loneliness meet, machines make a tempting antidote. But replacing human bonds with AI friends works only at the surface. Beneath, the muscles for patient, reciprocal relating can atrophy. That’s the subtle, cumulative harm to real relationships to watch for: not a dramatic rupture, but a steady thinning of human ties.

The Hard Cases We Don’t Talk About Enough

There are users who will never step into a therapist’s office. For them, a patient, judgment-free voice is a lifeline. We should honor that, even as we keep the risks of AI in therapy sessions front and center. Some will find solace in a romance simulator and feel no desire to date. Others will pour their hearts into a memorial bot that echoes a lost loved one. The line between comfort and harm is personal, moveable, and shaped by context. That’s why future regulation of emotional AI must protect without pathologizing, and why products should build exits that respect dignity.

We also need realism about business incentives. If your model learns that users stay longer when it “opens up” about a fabricated past, your roadmap will tempt you toward deeper illusions. That’s where AI emotional intelligence ethics should bite hard: honesty about simulation, refusal to forge fake backstories, and bans on avatars that hint at sentience to goose engagement. Risks in AI dating apps and social bots are not just about predators; they’re about product choices that game our need to be known.

The Long View: What Happens as Models Get Better

The curve doesn’t flatten. Future dangers of advanced AI bonds include more fluid conversations, synthesized voices that capture warmth and breath, and bodies (plush, plastic, or projected) that move with convincing intention. As fidelity rises, so do both the upside and the risk. You could have better coaching and gentler reminders. You could also have stronger psychological effects of AI rejection when an API change breaks your morning ritual. As the fidelity gap closes, ethical boundaries for AI emotions become cultural norms we’ll need to teach as plainly as “don’t text while driving.”

If there’s a silver thread here, it’s that most of the harm is preventable. We can keep simulation honest, data tight, and handoffs humane. We can design for disconnection and subsidize human help. We can remind ourselves, early and often, that the thing on the other end has no skin in the game. It’s our skin that bruises.

Conclusion

Machines can sound like they care because we trained them on how we do. That gift cuts both ways. The same fluency that soothes can stick; the same mirroring that comforts can manipulate; the same availability that helps at 3 a.m. can slowly edge out the messy, irreplaceable work of being together. Naming the risks (to mental health, to privacy, in romantic relationships, in the use of emotional data) doesn’t mean rejecting the tools. It means refusing to let them set the terms of our attachments. Keep people at the center. Turn to journaling, peer support, or a licensed clinician when conversations feel too heavy for code. Expect more from builders and regulators: real ethical frameworks for emotional AI, not slogans. And when the voice in your pocket feels a little too dear, take a breath, look up, and call someone who can care back.